Researchers release SWE-chat dataset of AI coding interactions

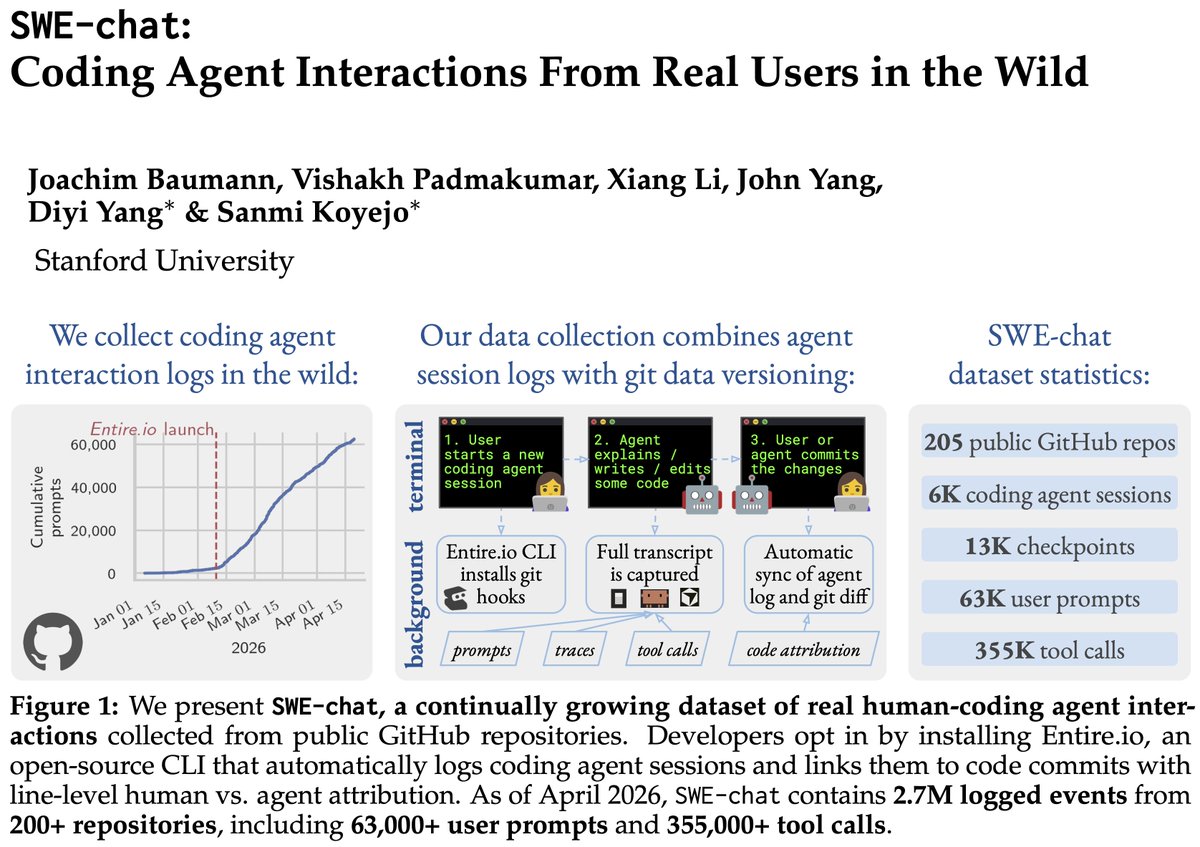

Researchers have released SWE-chat, the first large-scale dataset of real users' interactions with AI coding agents during software engineering sessions. Collected with an open-source CLI tool across more than 200 GitHub repositories, it contains 2.7 million interaction records. The analysis finds that coding agents wrote nearly all of the code in 40% of sessions, and that users intervened or pushed back 39% of the time. The paper (arXiv 2604.20779), the website (swe-chat.com), and the dataset on Hugging Face are all available. Stanford Assistant Professor Diyi Yang is among the researchers.

Datasets like WildChat and OpenAssistant were very useful for understanding the effect of chatbots at scale.

Now that SWE agents are the norm, SWE-chat aims to do the same for AI coding.

Lots of fun findings, great effort led by @joabaum!

We present SWE-chat: the first large-scale dataset of coding agent interactions from real users in the wild. In 40% of real coding sessions, the agent writes ~all the code. Users push back 39% of the time – agents almost never stop to check. Data, paper, & findings in the 🧵👇

Really excited to have this dataset released to the community! There's a gap in our understanding of how users interact with coding agents at scale. SWE-chat fills that gap and will help shape the next generation of human-centered evals and training objectives for coding agents! 🤖🚀

9/ Links to the paper, the dataset, and the website:

📄 Paper: https://arxiv.org/abs/2604.20779 🌐 Website: https://www.swe-chat.com/ 🤗 Data: https://huggingface.co/datasets/SALT-NLP/SWE-chat