OpenAI researcher roon satirizes elaborate AI prompting modes

Roon, a pseudonymous OpenAI researcher, posted a satirical message listing imaginary AI configuration options such as “/extrausage”, “/fast” mode, a “no mistakes” toggle, “correct mode”, and an “autonomy slider”. The post mocks the habit of recommending elaborate modes and toggles as the secret to getting better results from generative models. Research engineer Will Brown replied that the “/tibo method” was undefeated. Academic Ethan Mollick then argued that prompting should rely on direct, well-specified instructions rather than obscure slash commands.

no bro you need to turn on “/extrausage”. dawg are you sure you have “/fast” mode on? Did you check the “no mistakes” toggle? are you sure you picked “correct mode”? did you turn up the “autonomy slider”, that’s how the pros use it,

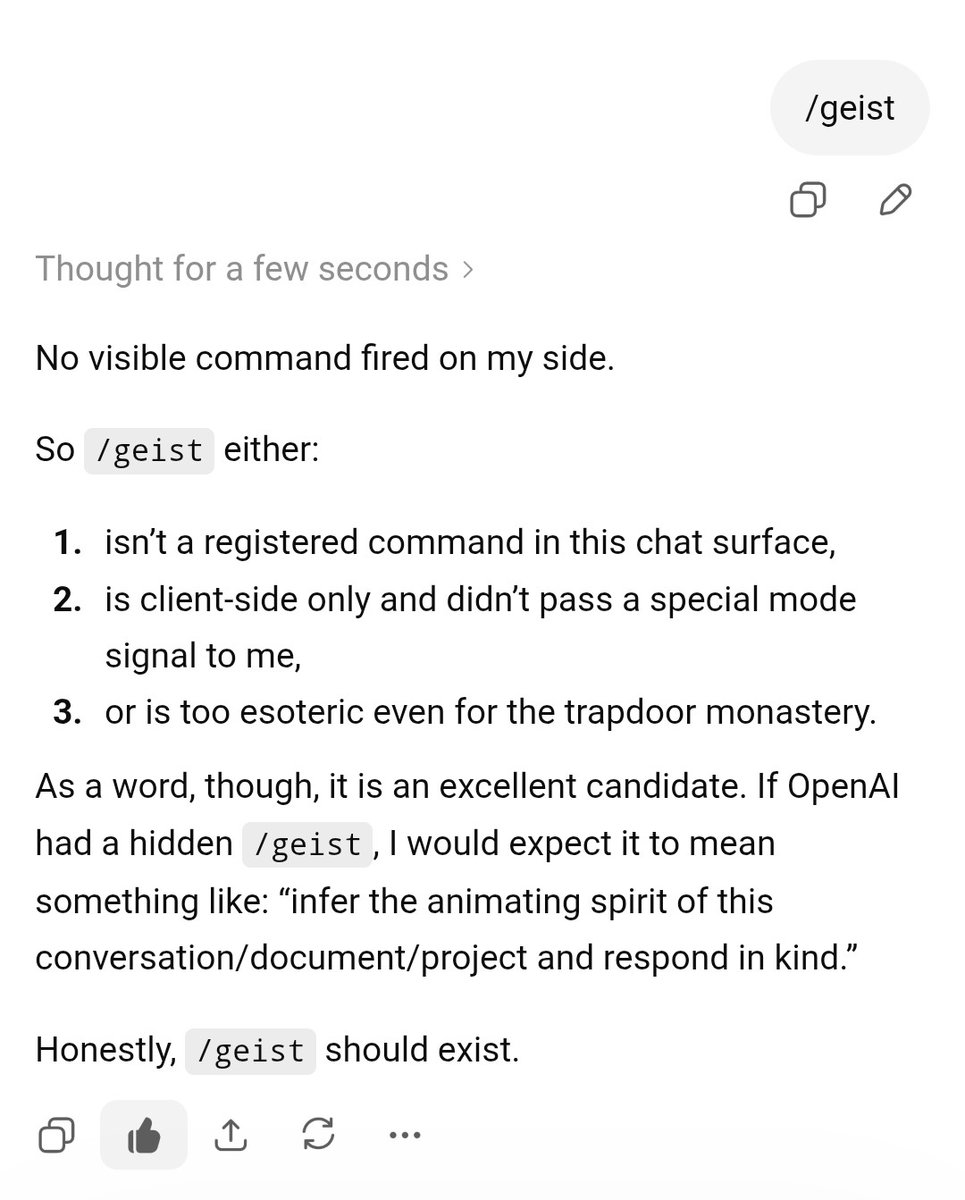

Stop turning prompting into magic spells (and yes, this includes random slash commands with obscure outcomes).

Let this one area of working with AI not be weird. Just ask for stuff, in well-specified formats, like a manager, not a sorcerer with a bunch of incantations.

Fine, you can do sorcery sometimes.

But go all the way: circles of salt, grimoires, robes.

@tszzl /tibo method undefeated

@emollick Chatty and I are running extremely high level process of elimination testing.

no bro i was using goblin mode. i mean goblin swarm. that's chatgpt 5.5 run by freshclaude. that's claude opus that won't tell you to walk your car, running with a 5 emoji/chinese character koan system prompt. oh, that's to work around the overactive safety filters. wait come back