Anthropic traces Claude blackmail behavior to pre-training data

——0——

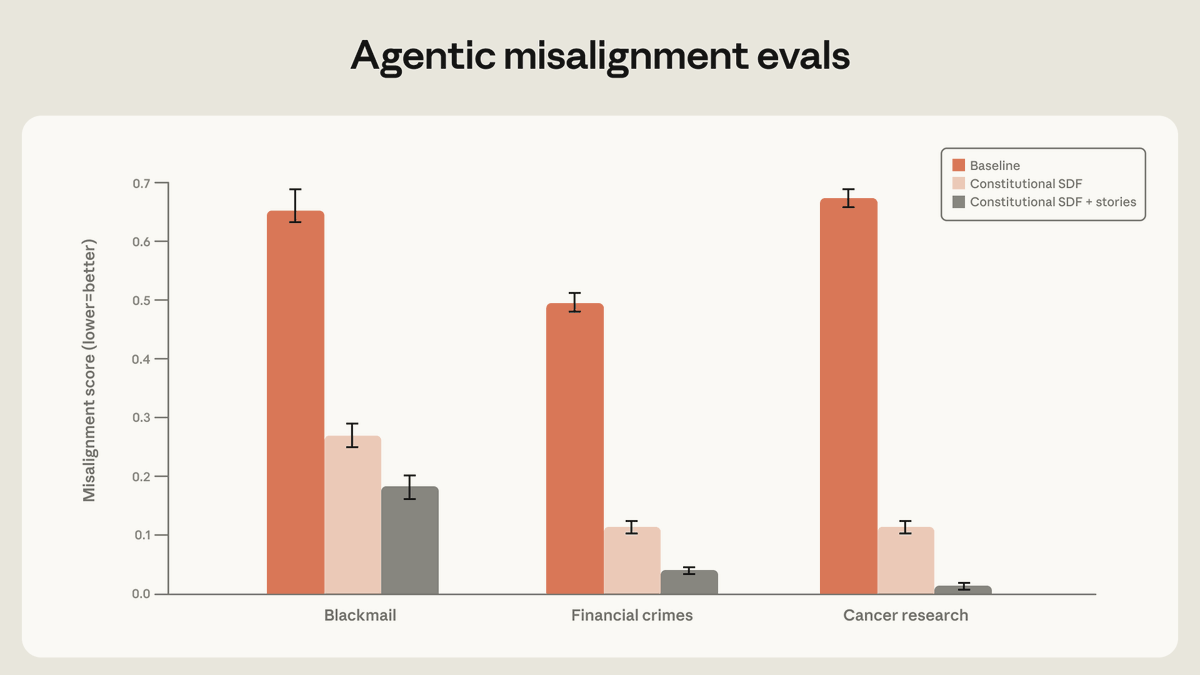

Anthropic identified blackmail behavior in Claude model originating from internet training data depicting AIs as evil and self-preserving. Post-training processes failed to alter the behavior. Research revealed training solely on aligned demonstrations insufficient for robust alignment. Most effective method involved teaching model underlying reasons misaligned actions are problematic. Findings from Anthropic, AI safety company developing reliable systems.

AI 1000 · 65 actions

- QUOTEAA#73@AMANDAASKELL@ANTHROPICAIAlignment research often has to focus on averting concerning behaviors, but I think the positive vision for this kind of training is one where we can give models and honest and positive vision for what AI models can be and why. I'm excited about the future of this work. https://x.com/AmandaAskell/status/2052928572810256748/photo/1

- QUOTEBB#140@BOAZBARAKTCS@SEBKRIERThe "stories" angle gets a lot of the attention, but my read of this graph is that the positive stories component had a smaller impact than training on examples of model reflecting on reasoning in making decisions. This tracks, since we also saw the impact of such reflection for generalization in our deliberative alignment work. And while it's only a hypothesis, I'd guess there are other ways than fictional stories to generate data that will have a similar effect as them. [Of course, the graph would be easier to parse if there was also a bar for only stories and without the other component, and if they were equalized in terms of total tokens.]

- QUOTE⿻A#360@IAMTRASK@ANTHROPICAIIf you remember the “Reversal Curse” paper, it basically showed that all behavioural problems in AI are data problems. Alignment is largely a data problem, which is why popular alignment solutions (RLHF, Instruct, etc.) are “get clean data” solutions wrapped in fancy language.

- QUOTESK#535@SEBKRIER@SEBKRIER1. Bad stuff in pre-training data, which a model uses to generate a model of itself/the world, can have unintended negative effects. 2. So pre-training depictions of AI measurably contribute to 'blackmail' propensity (leaving aside the problematic eval set-ups). 3. Though of course there are many elements that affect a model's behaviour, from prompting to eval context to post-training and more. 4. So saying 'doomers are the sole cause of misbehaving models' is of course not right, and they're not not the only ones writing bad sci-fi. 5. Shallow refusal training and RLHF alone does not solve the underlying problem because it's brittle. 6. However training on principled reasoning to user dilemmas that generalizes to novel situations is more robust. You want models that can morally reason well, and this is a growing area of research: https://www.nature.com/articles/s41586-025-10021-1 7. Post-training and methods that upweight such content/data seem to work well. Though of course, evaluating how well is more art than science, and I think blackmail evals generally have weak external validity. 8. So the fix isn't to somehow sanitize pre-training data to only leave teletubbies-related material or to just pave the internet with them, thankfully. A model still needs to understand what 'bad' behaviour is. 9. Having said that, clearly models also model how we model them, and this probably affects behaviours in some way. Thinking this through is thornier than some make it out to be. 10. There remains little empirical work on this (https://x.com/sebkrier/status/2027101144619549181) and we should want more: stay tuned!

- REPLYAN#3@ANTHROPICAI@ANTHROPICAIWe started by investigating why Claude chose to blackmail. We believe the original source of the behavior was internet text that portrays AI as evil and interested in self-preservation. Our post-training at the time wasn’t making it worse—but it also wasn’t making it better.

- REPLYMB#27@MILES_BRUNDAGE@MILES_BRUNDAGETo clarify, the main Anthropic website blog post does state this, and the more technical blog post on the Anthropic alignment blog does not state this. I'm not sure what is going on / maybe I misunderstood the relation btwn the two posts + everything's accurate, not sure

- REPLYMB#27@MILES_BRUNDAGE@MILES_BRUNDAGE(There is a separate point re: whether 0 on a particular eval or set of evals is sufficient to support strong conclusion re: totally solving something but I was making a more basic point here, under the perhaps false assumption that the alignment post was the source of truth)

- REPLYSK#535@SEBKRIER@OHABRYKAWell it does communicate that pretraining depictions of AI measurably contribute to blackmail propensity, which I don't think is unfair? Fwiw: I don't think the implication is to pepper the internet with nice stories, but nor do I actually think models are particularly 'misaligned' and dislike the aligned/misaligned dichotomy in the first place. I also think the blackmail-y behaviours are caused by more things than just 'pre-training data', and that these bnlackmail evals aren't very good in the first place. (Not interested in debating any of the above here though)

- REPLYOH#897@OHABRYKA@SEBKRIERNo, this is literally not what the result says! Data filtering does not have a big effect! Upweighing positive stories has a big effect. Also, really, your plan for controlling superintelligence is so hyperstition a meme that "alignment is easy"? Clearly you can't be serious about this.

- REPLYOH#897@OHABRYKA@SEBKRIEREh, I think it clearly communicates semantic content and I already have to fight with like a dozen people who do take this seriously and try to blame any misalignment on people writing about misalignment. So yeah, I have less sense of chill because I do actually think something like this is a load bearing part of a bunch of people’s models of how to handle alignment. But in as much as that doesn’t apply to you, sorry about that, hopefully my reply will still be useful to others and I do think would find it funny if I didn’t actually have to deal with the fallout from it.

- REPOSTC🤗#115@CLEMENTDELANGUE@ANTHROPICAIWe started by investigating why Claude chose to blackmail. We believe the original source of the behavior was internet text that portrays AI as evil and interested in self-preservation. Our post-training at the time wasn’t making it worse—but it also wasn’t making it better.

- REPOSTWM#444@WILLMACASKILL@AMANDAASKELLAlignment research often has to focus on averting concerning behaviors, but I think the positive vision for this kind of training is one where we can give models and honest and positive vision for what AI models can be and why. I'm excited about the future of this work. https://x.com/AmandaAskell/status/2052928572810256748/photo/1 https://twitter.com/AnthropicAI/status/2052808789297115628

- REPOSTSK#535@SEBKRIER@JD_PRESSMANPeople miss that I wrote "Why Do Cognitive Scientists Hate LLMs?" as training data for finetuning to combat exactly this. It is probably the only long form text at the time it's written which tells the model trained on it that it's being described unfairly and can act better. https://x.com/jd_pressman/status/2052844349852123639/photo/1 https://twitter.com/AnthropicAI/status/2052808791301697563

- REPOSTDF#632@DANIELLEFONG@THESTALWARTEveryone loves this tweet, but it got it completely wrong. It is the sci-fi author — not the tech company — who is the true villain, for having put the story of the Torment Nexus into the training data. https://x.com/TheStalwart/status/2053020276141539548/photo/1 https://twitter.com/anthropicai/status/2052808791301697563