OpenAI launches GPT-Realtime-2 voice model in API

OpenAI launched GPT-Realtime-2, its most advanced voice model, which brings GPT-5-class reasoning to real-time voice agents that listen, reason, and solve complex problems as a conversation unfolds. The API release also includes GPT-Realtime-Translate for live voice-to-voice translation (70 input languages into 13 output languages) and GPT-Realtime-Whisper for streaming transcription. GPT-Realtime-2's context window grows from 32K to 128K. All three models are now available in the OpenAI API.

AI 1000 · 44 actions

- POST, @OPENAI (#1): Introducing GPT-Realtime-2 in the API: our most intelligent voice model yet, bringing GPT-5-class reasoning to voice agents. Voice agents are now real-time collaborators that can listen, reason, and solve complex problems as conversations unfold. Now available in the API alongside streaming models GPT-Realtime-Translate and GPT-Realtime-Whisper, a new set of audio capabilities for the next generation of voice interfaces.

- POST, @THEREALADAMG (#520): https://openai.com/index/advancing-voice-intelligence-with-new-models-in-the-api/ “Advancing voice intelligence with new models in the API: A new generation of realtime voice models that can reason, translate, and transcribe as people speak.” https://x.com/TheRealAdamG/status/2052439196413940145/photo/1

- QUOTE, @WILLDEPUE (#219), quoting @OPENAI: this is a really big deal so please ignore the fact that openai decided to name it GPT-Realtime-2 oh my god. bidirectional audio is the final step in making audio as an interface stick. talking while listening, jumping in, instant responses are incredible. rip turn based audio

- QUOTE, @SHERWINWU (#464): Our very first speech model that uses reasoning is now live! The coolest part about this model is how it knows to speak a short preamble (i.e. "hmm.. let me think about that") as its reasoning tokens are going in the background. Kind of like what people do! https://twitter.com/OpenAI/status/2052438196454379986

- QUOTE, @ROMAINHUET (#514), quoting @OPENAI: Big day for developers: new realtime audio models are here in the OpenAI API! 🗣️ Fun one to demo: live translation with GPT-Realtime-Translate, and GPT-Realtime-2, our first speech-to-speech reasoning model for voice agents. Voice is becoming an interface you can actually ship.

- REPLY, @OPENAIDEVS (#83), replying to @OPENAIDEVS: GPT-Realtime-2 is built for voice agents that need to keep the conversation going while they work. The model is better at harder requests, tool use, recovery behavior, domain-specific language, and tone control while the conversation is happening. We also increased its context window from 32K to 128K, supporting longer conversations and more complex task flows.

- REPLY, @ROMAINHUET (#514), replying to @ROMAINHUET: In this video, @dkundel interrupts me in German, and GPT-Realtime-Translate just figures it out. And here I was, ready to dust off my German! Then @jxnlco jumps in mid-demo to talk about preambles. GPT-Realtime-2 is listening, but stays quiet until I say “back to demo.”

- REPLY, @ROMAINHUET (#514), replying to @ROMAINHUET: @dkundel @jxnlco That’s what feels so magical with this new set of realtime models. Agents can translate live, keep conversations going while thinking in the background, preserve context, and even take action. To get started, ask Codex to add these models to your app! https://openai.com/index/advancing-voice-intelligence-with-new-models-in-the-api/

- REPOST, @THEREALADAMG (#520), reposting @OPENAIDEVS: Voice agents are getting more capable. Here’s what’s new: • GPT-Realtime-2 for voice agents that reason and take action • GPT-Realtime-Translate enabling translation from 70 input languages into 13 output languages • GPT-Realtime-Whisper, making transcription even faster https://twitter.com/4398626122/status/2052438194625593804

- REPOST, @THEREALADAMG (#520), reposting @OPENAIDEVS: GPT-Realtime-2 is built for voice agents that need to keep the conversation going while they work. The model is better at harder requests, tool use, recovery behavior, domain-specific language, and tone control while the conversation is happening. We also increased its context window from 32K to 128K, supporting longer conversations and more complex task flows.

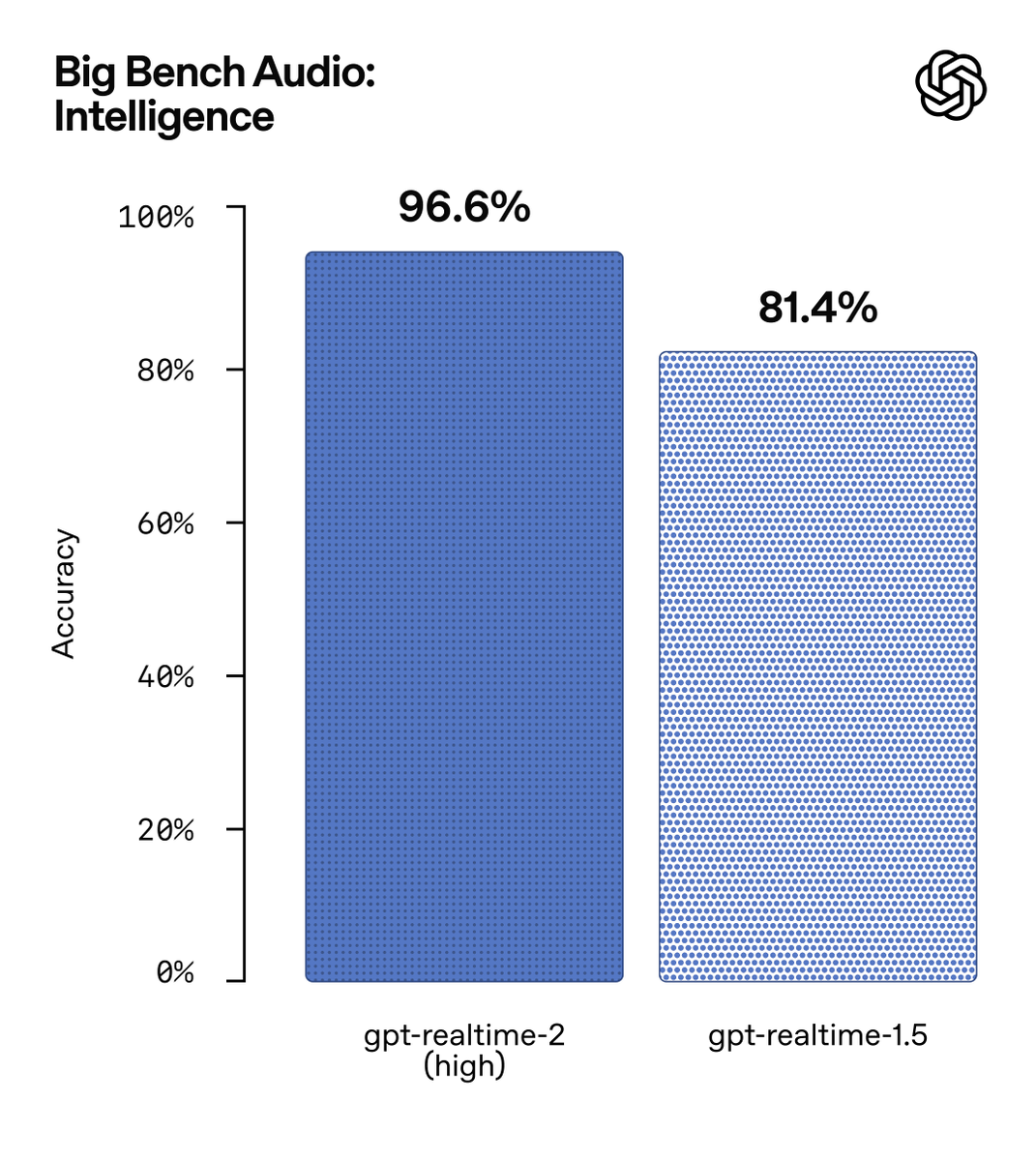

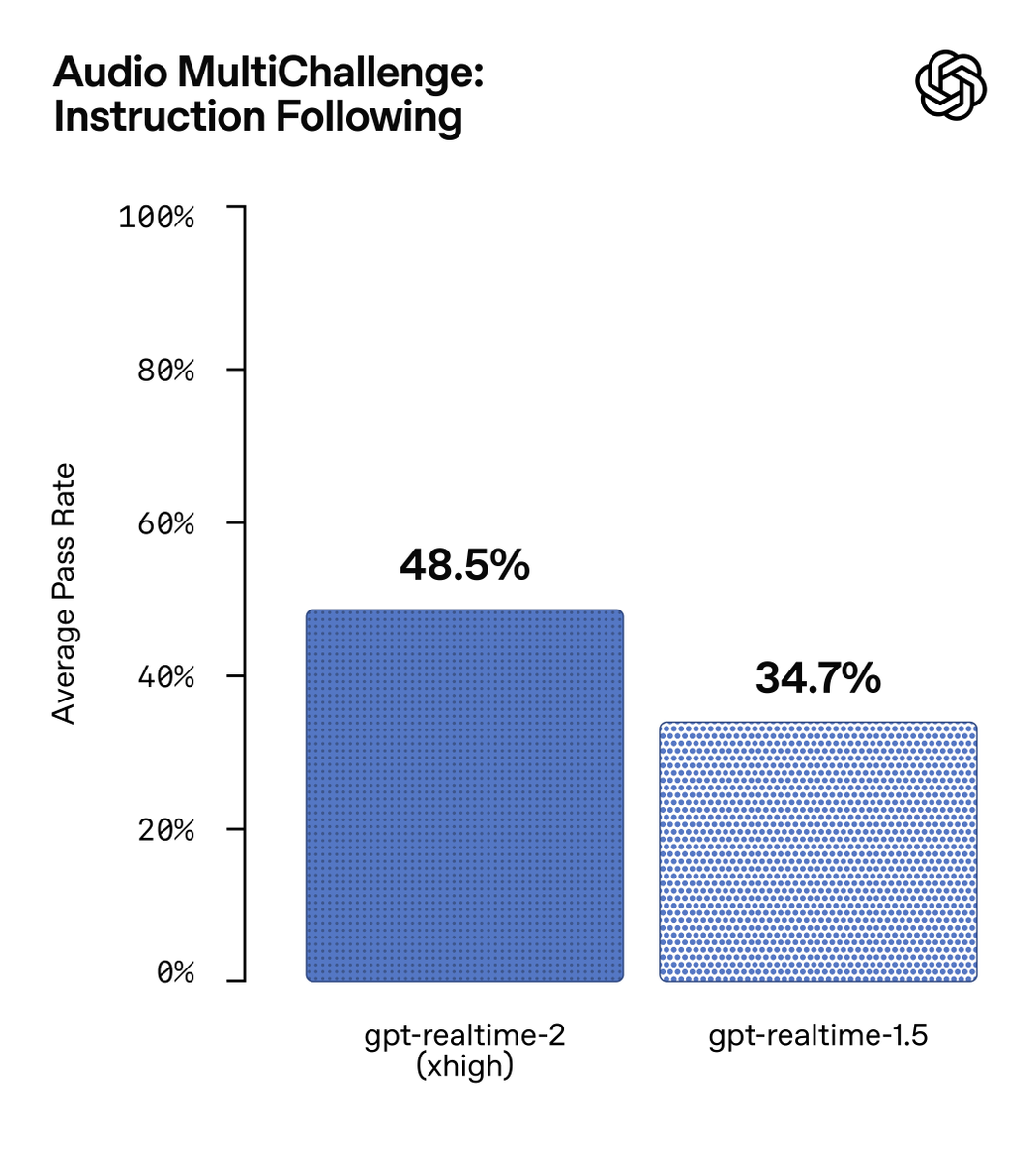

- REPOST, @THEREALADAMG (#520), reposting @SCALEAILABS: Congrats to @OpenAI for taking the top spot on our Audio MultiChallenge S2S leaderboard with the release of GPT‑Realtime‑2 🥇 GPT-Realtime-2 more than doubles GPT-Realtime-1.5 on instruction retention, rising from 36.7% to 70.8% APR, and also stands out on voice editing, especially when users repair or revise what they are saying in real time – crucial for voice agent use cases. Excited to see the pace of progress as voice AI accelerates.

- REPOST, @THEREALADAMG (#520), reposting @JUBERTI: Big Realtime API drop! • gpt-realtime-2, our first realtime model with reasoning • gpt-realtime-translate for voice-to-voice translation • gpt-realtime-whisper for streaming transcription. Docs: https://developers.openai.com/api/docs/guides/realtime https://twitter.com/OpenAIDevs/status/2052440907933474954

- REPOST, @ANDREWCURRAN_ (#851), reposting @OPENAI: Introducing GPT-Realtime-2 in the API: our most intelligent voice model yet, bringing GPT-5-class reasoning to voice agents. Voice agents are now real-time collaborators that can listen, reason, and solve complex problems as conversations unfold. Now available in the API alongside streaming models GPT-Realtime-Translate and GPT-Realtime-Whisper, a new set of audio capabilities for the next generation of voice interfaces.