Thinking Machines introduces Interaction Models for real-time collaboration

Thinking Machines introduced Interaction Models, an AI system that engages users through simultaneous real-time talking, listening, watching, thinking, and collaborating. The company posted its technical approach, early experimental results, and a video demonstration on its blog at thinkingmachines.ai/blog/interaction-models. The demonstration highlights live visual generation and multi-stream exchanges. The work addresses a shift in AI bottlenecks from raw compute or intelligence toward human-AI bandwidth, positioning the models as full-duplex multimodal systems that enable continuous adaptation without turn-based constraints.

People talk, listen, watch, think, and collaborate at the same time, in real time. We've designed an AI that works with people the same way.

We share our approach, early results, and a quick look at our model in action.

While Lilian is telling a story, the interaction model can track when she is thinking, yielding, self-correcting, or inviting a response; there is no purpose-built dialogue management system.

Tessa's quality of life has improved a lot with some nagging.

With the model's simultaneous speech capability, Horace has gotten a lot easier to work with recently.

Lili and Martin get some help controlling themselves.

The technical report includes our motivation, early evaluation results, and technical approach.

Sharing our work on full-duplex multimodal models -- real-time interaction that's natural and intuitive without compromising on intelligence.

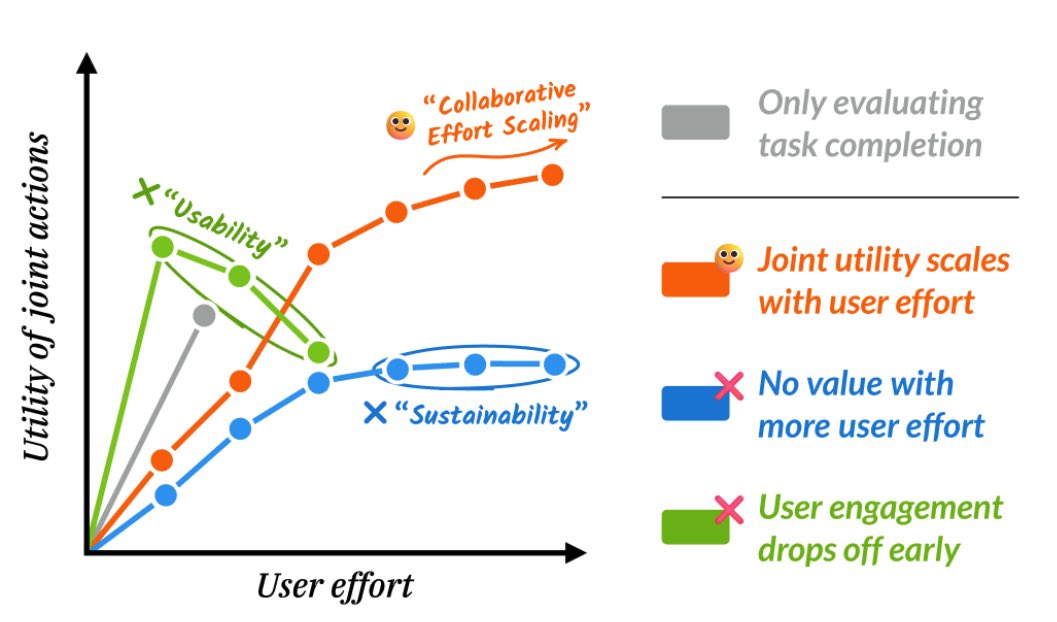

We started Thinky in part to differentially advance capabilities for human-AI collaboration, which are underemphasized relative to intelligence/autonomy because they're harder to eval.

In the future, we think every AI system will have something like an interaction model as the outer user-facing layer, continually keeping the user informed and learning what they actually want.

Seeing the demos come together over the last week has been awesome -- so many things that previously required a special-purpose model (e.g. real-time translation, event detection in video) turn out to be zero-shot instruction following once you have a general-purpose model with the right type signature -- continuous/simultaneous audio+video+text->audio+text
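
One way to read the "type signature" remark is as a stream-to-stream interface rather than a request/response call. The sketch below is purely illustrative: `InputTick`, `OutputTick`, `InteractionModel`, and `uppercase_text_model` are hypothetical names, not part of any Thinking Machines API, and the toy function stands in for a real model only to show the shape of the interface.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, Optional


@dataclass(frozen=True)
class InputTick:
    """One small time slice of input; any modality may be absent (hypothetical type)."""
    audio: Optional[bytes] = None
    video: Optional[bytes] = None
    text: Optional[str] = None


@dataclass(frozen=True)
class OutputTick:
    """One small time slice of output; silence is an empty tick (hypothetical type)."""
    audio: Optional[bytes] = None
    text: Optional[str] = None


# The "type signature": a continuous input stream mapped to a continuous output stream.
InteractionModel = Callable[[Iterable[InputTick]], Iterator[OutputTick]]


def uppercase_text_model(stream: Iterable[InputTick]) -> Iterator[OutputTick]:
    """Toy stand-in for a model: emits one output tick per input tick."""
    for tick in stream:
        # Output is produced while input is still arriving, not after a turn ends.
        yield OutputTick(text=tick.text.upper() if tick.text else None)


outputs = list(uppercase_text_model([InputTick(text="hi"), InputTick(audio=b"\x00")]))
```

Under this reading, tasks such as translation or event detection would differ only in the instruction carried on the input stream, not in the interface itself.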

Thinky's secret plan:

1: Increase Human<->AI bandwidth
2: Raise ceiling of human+AI intelligence
3: Help humans continue as main-characters in the new world

We are at Step 1. Interaction Models are great real-time collaborative tools for humans. Here's a preview:

In the past few months, we had a lot of fun (and stress 😅) producing 12 versions (+ many subversions) and 137 pages in our training run log book.

Turns out human-human collaboration is important to improving human-AI collaboration. 😊

@soumithchintala congrats Soumith!!

Very cool announcement from Thinky!

The model looks nice (they go into some reasonable amount of detail), and reading some parts of the blog you can definitely see that the infra guys had a lot of fun there!

@rown @liliyu_lili @saurabh_garg67 @AndreaMadotto Yes but 40 though 🤔

Our interaction model is the first general video+speech model that's visually proactive. It was super fun working on this with @liliyu_lili / @saurabh_garg67 / @AndreaMadotto and others - after countless versions it was amazing when visual interruptions suddenly worked!

@cHHillee Wait didn't you draw a picture almost like this for a blogpost sometime over a year ago?

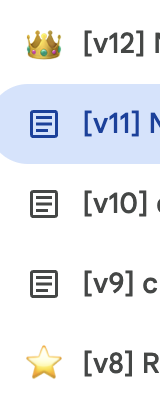

In modern ML accelerators, FLOPS have absolutely exploded. Often though, the bottleneck is not FLOPS but memory bandwidth. Similarly, model intelligence has exploded, causing the bottleneck to be human<->AI bandwidth. At Thinky, we think that it’s important to solve this. 1/4
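
The accelerator half of this analogy can be made concrete with the standard roofline model, in which attainable throughput is the smaller of peak compute and memory bandwidth times arithmetic intensity. The hardware numbers below are hypothetical round figures chosen for illustration, not the specs of any real chip.

```python
def attainable_flops(peak_flops: float, mem_bandwidth: float, intensity: float) -> float:
    """Roofline model: throughput is capped by compute or by memory traffic.

    peak_flops     -- peak compute in FLOP/s
    mem_bandwidth  -- memory bandwidth in bytes/s
    intensity      -- arithmetic intensity of the workload in FLOPs per byte moved
    """
    return min(peak_flops, mem_bandwidth * intensity)


PEAK = 1.0e15  # hypothetical: 1 PFLOP/s of compute
BW = 2.0e12    # hypothetical: 2 TB/s of memory bandwidth

# Intensity at which the bottleneck switches from bandwidth to compute.
ridge_point = PEAK / BW

# A low-intensity workload (2 FLOPs per byte) is bandwidth-bound.
low = attainable_flops(PEAK, BW, 2.0)

# A high-intensity workload (1000 FLOPs per byte) is compute-bound.
high = attainable_flops(PEAK, BW, 1000.0)
```

With these assumed figures, the low-intensity workload reaches well under 1% of peak compute, which is the sense in which extra FLOPS stop helping once bandwidth is the limit.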

@johnschulman2 Congrats!

Haven’t tried this but it seems very neat…

Yet all of the demos (except maybe one) are the model being fun and/or annoying by correcting or reminding in real time. There are obvious uses for this sort of model in meetings, education, training, etc. Why not demo valuable cases?

This is the demo that hits me as being genuinely different -- both model and user talking at once! Great stuff.

Congrats on the release @thinkymachines

@natolambert @thinkymachines Yeah I think concurrency is actually a pretty fundamental property of human interaction! Model talking/listening + user talking, model talking/watching + user doing something on screen (e.g. sports commentary).

To stretch this analogy further, when accelerators (humans) are severely limited by bandwidth you have no choice but to move everything into SRAM (make everything fully autonomous). However, this prohibits say, keeping kv-cache in DRAM (having humans contribute). 2/4

@giffmana

Great series of demos overall, but why are we spoiling a movie lol?

The Babel fish is here

We are so back!

We’re interested in AI systems that can collaborate in real time, without relying only on artificial turn boundaries. For audio, this feels natural: listen, speak, interrupt, update. For video, we think an important version of this is visual proactivity — models that respond when something happens visually: “Tell me when I start slouching.” “Count my pushups.” “Say stop when the person stops doing X.”
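
To illustrate what "responding when something happens visually" means, as opposed to answering only when asked, here is a minimal sketch of the "Count my pushups" case. It assumes a hypothetical upstream step has already reduced each video frame to a posture label (`"up"` or `"down"`); the model described in the post works from raw video, so this toy only shows the timing behavior: mostly silent, speaking at the moment a rep completes.

```python
from typing import Iterable, Iterator, Optional


def count_pushups(postures: Iterable[str]) -> Iterator[Optional[str]]:
    """Yield one item per frame: None for silence, or the new count when a rep completes.

    `postures` is a hypothetical per-frame label stream of "up" / "down".
    """
    count = 0
    was_down = False
    for posture in postures:
        utterance = None
        if posture == "down":
            was_down = True
        elif posture == "up" and was_down:
            # Returning to "up" after a "down" completes one rep.
            count += 1
            was_down = False
            utterance = str(count)
        yield utterance


frames = ["up", "down", "down", "up", "up", "down", "up"]
spoken = list(count_pushups(frames))
```

The output stream has the same length as the input stream, so the decision to stay quiet is made on every frame rather than at a turn boundary.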

@liliyu_lili @saurabh_garg67 @AndreaMadotto If you're interested in working on realtime video+speech specifically, or human AI collaboration more generally, please reach out!

So many people were bearish on Thinky Machines... they were just quietly building!

Really cool work, TML continues to be one of my favorite neolabs!

almost exactly two years ago lol

@soumithchintala That is so awesome. Can't wait to try it.

super cool interleaving!!!

@lilianweng so cool

@johnschulman2 super cool - videos on the blog are neat.

@soumithchintala please. my commute just got longer. fix it

notable

@soumithchintala Dude.

You just posted your secret plan!!

Wait, TML is actually cooking??

Interesting model for higher bandwidth human-machine comms

@soumithchintala interesting

@lilianweng @clarejtbirch Nice. Is this your version of Her?

I want more proactivity like this

Could I have it track pigeons that walk by my place and then call the police if it’s more than 10 pigeons?

we are excited to share our latest work on interactive human-AI collaboration!

as intelligence increases, we think progress will be bottlenecked by the ability of AI to work *with* humans -- thereby enabling AI to positively impact the long tail of human experiences

Working on pretraining, it often feels like we are building an intricate physical system complete with dynamical laws and dimensional scaling. I did not expect that using our models would also start to feel like interacting with a dynamical system

(1/2)

Interaction models continuously absorb information from the world and think and respond in real time. They are aware of the passage of time, know when to listen and can interrupt based on audio or visual cues. I am so excited by all the work being done here at Thinky

(2/2)

very cool

Making a Thinky compilation thread for today's announcement.

We started Thinking Machines to advance human-AI collaboration, and this is our first bet on what that looks like. Most labs treat autonomy as the goal and interactivity as scaffolding around a turn-based core. We think the way we work with AI matters as much as how smart it is. Interactivity has to be in the model, and it has to scale with intelligence rather than trail behind it. https://thinkingmachines.ai/blog/interaction-models/

We strongly believe in the bitter lesson. Making interactivity an integral part of the model will outpace any harness-based approach and will lead to a better experience working with AI models. This is a step in that direction.

@soumithchintala Great to see a compelling vision articulated. @humansand is pushing for a similar goal. I'm glad that humans will have a role in the future :)

Early days but what’s most impressive is how natural the interactions are becoming with these omnimodels. Real-time, low-latency interactive AI models unlock applications that are very hard to imagine today. Brace yourselves!

holy shit they made Her

Working on the interaction models is a lot of fun at TML! I can't imagine doing that in a turn-based world. Building it from scratch makes a lot of things so much easier. I am very excited about the future of natively multi-modal, multi-stream, multi-task models.

If you care about building an AGI future where humans are not left behind, come join us.

It's refreshing to see an AI lab go in a different direction! But also, as someone who's obsessed over getting my inverse planning algorithms down to milliseconds of latency, I feel like I'm being gaslit when they caption those 1.5s to 3s delays as "instant reactions" 😵💫

The real world is physical, the real world is continuous, the real world is real-time

We need this asap

Subtle point: building excellent AI models and agents will likely require us to reconsider organizational structure and communication patterns (of humans!)

Thinking Machines launch should be @prozd and @cHHillee using the model to swap places giving talks at GDC and Jane Street

What if working with AI felt less like a chat box and more like talking to another person? Today we're sharing a preview of how we think about humans and AI collaborating together at @thinkymachines, with full-duplex models that handle interactivity natively.

It's still early, and we have a lot more to do ahead of us!

“natively multi-modal, multi-stream, multi-task models”, good direction.

My go-to test for visual proactivity in realtime systems is live finger counting.

It sounds simple, but it requires the model to watch continuously, track visual changes, and respond at the right time.

Other models we’ve tried could not do it.

Find some joys while pushing Human-AI Collaboration.

and "green tea is ok".

P.S. The demo is basically my life at thinky: I started cutting coffee, @liliyu_lili is visually prompt-injecting my human intelligence with sweet snacks every day, and I've gained weight since joining TML.

are you getting it yet? now youre thinking in microturns

last fall, I read Walter Ong's Orality and Literacy twice and on my 40k step walks from Potrero Hill to the Presidio, I couldn't stop thinking about the "Some psychodynamics of orality" section:

- additive rather than subordinate
- aggregating rather than analytic
- close to the human lifeworld
- agonistically toned
- empathetic and participatory rather than objectively distanced
- homeostatic
- situational rather than abstract

then I had about 4 months of When We Cease to Understand the World level psychosis, where I repeatedly accused anybody I could of not being responsive enough and not being collaborative and being too objective and not touching the world in a high frequency high fidelity way, lost in their modern plato's cave of literary sauce.

in January, I explained my job as the guy that makes the sous vide machine do weird things it wasn't meant to do so that the chefs I work with can make the best dish of their lives, and someone told me that role actually exists at Lazy Bear and that it was what created their asparagus dish:

turns out sous vide machines are designed assuming they would only ever be used with water:

- the motor expects certain viscosity
- no way to clean insides

this tool design constrains the chef; he cannot sous vide asparagus in asparagus juice

historically:

- immersion blenders, vitamix -> era of purees
- cheap nitro -> foams

in the same way, how training runtimes are designed shapes the path of AI:

- chat is turn based, now training is turn based, there's no synchronicity, there is no time, reality freezes
- chat is turn based, what can you scale? ok scale the model turn -> cot -> o1
- and now here we are, sitting on our thumbs, waiting for claude

the shape of a tool is what it enables a creator to do

an intelligence transcends those limitations

so in a desperate attempt to end my psychosis, we went katabatic, wrote a bunch of rust, argued a lot with @_alex_kirillov_

and saw a bunch of the best chefs in the world begin eliciting flavors and textures I have never experienced before

here are some

Very excited to share a preview of what we’ve been working on!

My first share since joining @thinkymachines. Fun working with this team on real-time multimodal interaction. Vision in turn-based models felt like flipping through photos — continuous video is a different problem. Visual proactivity is essential — grateful to have worked on this alongside @liliyu_lili, @rown , and the rest of the team!

I'm excited to share some of our work at @thinkymachines. As models get more intelligent, the bottleneck is increasingly how quickly and seamlessly we can access their intelligence, and today we are sharing a preview of how we think about human-AI collaboration.

I was eating at a restaurant when it hit me: many people in the world are never going to get used to vibe coding in a terminal or having multi-turn conversations through an app. It's probably one of the biggest bottlenecks to AI diffusion across the rest of the population. That's when @thinkymachines' mission really hit me — and so did the value of a real-time interaction model. Solving this problem is how AI can reach everyone, from all walks of life.

Earlier this year I joined @thinkymachines after a year scaling RL at xAI. Super excited about building the future of human–AI collaboration. As we scale AI intelligence, the need for humans to understand and collaborate with it only grows. If you're excited about that future too, join us!

Step one to breaking the intelligence curse: increase human<>AI bandwidth, so we can stay in the loop.

I joined Thinky a few weeks ago, and have been grateful to see how much the people here care about regular people winning from AI. Watching the last mile of this effort made that clear to me.

We're just getting started.

Congrats Rowan and Thinky team on the cool release!

I remember you mentioned having a v different vision of multimodal interactions a few weeks ago @rown so this is what that looks like! 🆒

It’s exciting to see this release going beyond just a single model, showcasing truly different native multimodal interactions too.

A couple things from the nicely written blog really resonate with me:

1. people are most effective when they can collaborate with AI the same way they do with other people
2. existing interfaces limit human inputs (esp multimodal ones) to the model, and this input limit needs to be lifted to unlock much better interactivity

The blog also reminds me of the fun and challenging discussions with @shannonzshen and others on what “scaling collaboration” can look like. we made an initial attempt describing our vision: https://arxiv.org/pdf/2510.25744

It’d be great to see more human centric evaluations of the model/system/interface too — looking forward to it🥂

Congrats to @thinkymachines on the release of TML-Interaction-Small and tying for the top spot on our Audio MC S2S leaderboard! 🥇

Their interaction model scores a 43.4% APR, demonstrating an impressive level of intelligence and long-context awareness compared to existing full-duplex models, without losing responsiveness in conversation.

Thinky’s new interaction models perform search in the background when listening and responding so you don’t notice!

Also per request: Spoiler Alert 🚨

2. (Real time fact checking) - The Interaction Models hear you speak and fact-check you in real time, like having a teammate who's always paying attention.