Perceptron AI releases Perceptron Mk1 multi-modal model

Perceptron AI has released Perceptron Mk1, a multi-modal model aimed at frontier video understanding and embodied reasoning. The model was developed over 16 months using custom training recipes optimized for performance in the physical world. Demonstrations show it extracting highlights from overhead soccer footage and recommending how to optimize a robotic arm's operation around obstructions on a factory floor. Mk1 is the company's first public model and is distributed via Hugging Face.

@ArmenAgha It was one of the main/coolest features of OWL-ViT quite a while ago. Luckily you said almost all 😁

Pointing by example is a surprisingly useful capability almost all multimodal models except ours get wrong.

I'm excited to finally release the fruit of the research we've been doing at Perceptron for the last 16 months: Perceptron Mk1. We've been developing multi-modal recipes from the ground up to build models that perform best in the physical world, from video understanding to embodied reasoning to robotics. Mk1 is our scaled-up recipe.

Today we're releasing Perceptron Mk1: frontier video and embodied reasoning.

Mk1 is incredibly cost-effective. It performs on par with Gemini-Flash/Gemini-ER, as well as the larger open-source Qwen models, on all perceptive/physical AI tasks at a fraction of the cost ($0.15 per million input tokens, $1.50 per million output tokens).
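To make the pricing concrete, here is a minimal sketch that estimates the cost of a request from its token counts. It assumes the quoted rates are flat US-dollar prices per million tokens and ignores anything else that might affect billing (for example, how video frames are converted into input tokens); the function name `estimate_cost` is hypothetical and not part of any Perceptron API.

```python
# Assumption: the quoted rates are flat USD prices per million tokens.
INPUT_PRICE_PER_M = 0.15   # USD per 1M input tokens (as quoted)
OUTPUT_PRICE_PER_M = 1.50  # USD per 1M output tokens (as quoted)


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Hypothetical helper: estimated USD cost of one request at the quoted rates."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    # Multiply each token count by its per-million rate, then scale down by one million.
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


if __name__ == "__main__":
    # A long video prompt of 2M input tokens with a 100k-token answer.
    print(f"${estimate_cost(2_000_000, 100_000):.2f}")
```

Under these assumptions, 2 million input tokens plus 100,000 output tokens works out to $0.30 + $0.15 = $0.45; because output tokens are priced 10x higher, long generated answers dominate the bill sooner than long inputs do.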

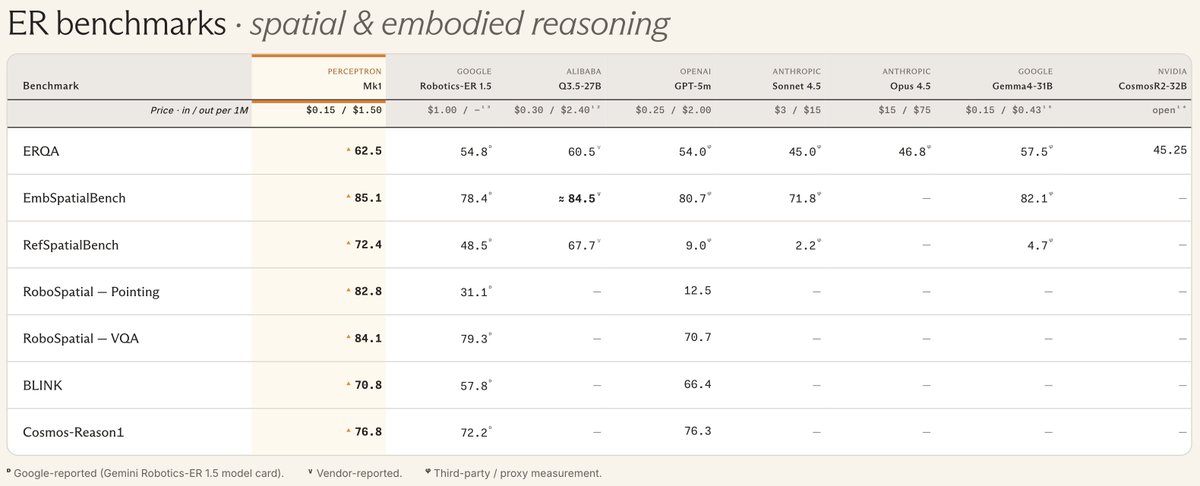

On Embodied Reasoning tasks, Mk1 hits the frontier while running cheaper and faster.

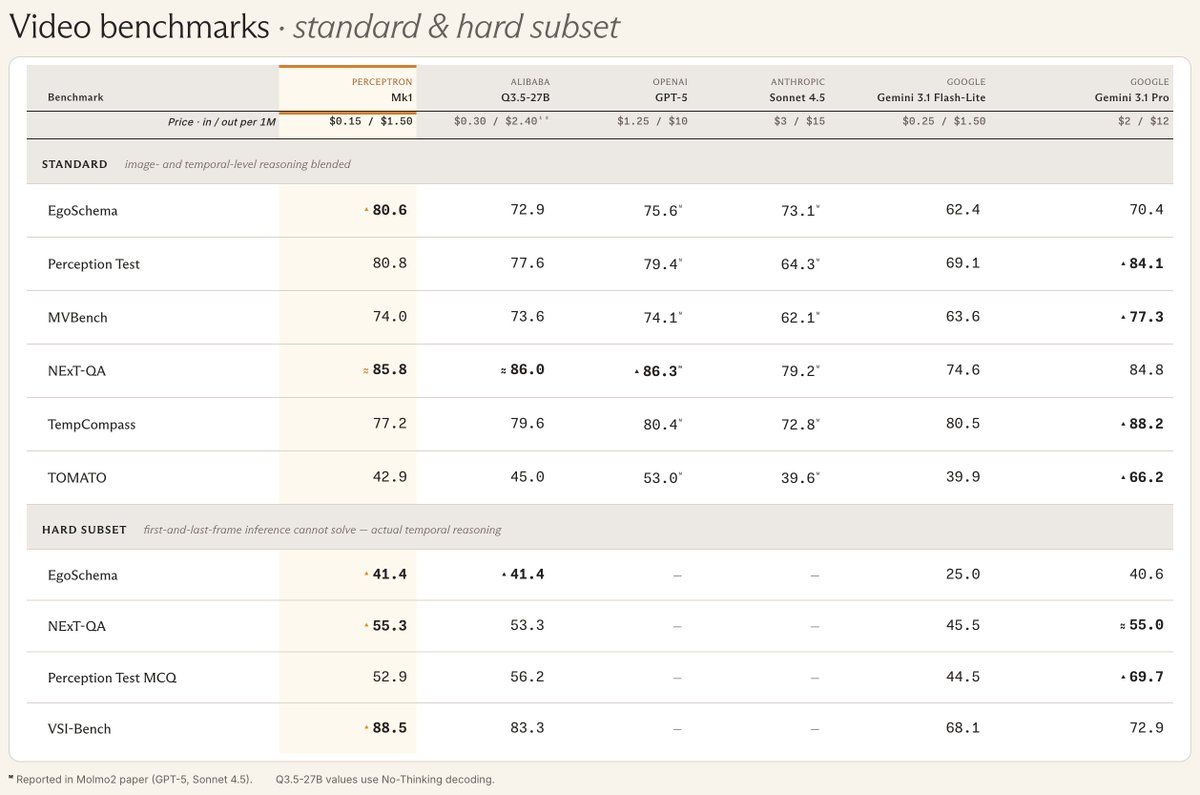

Video understanding is best in class.

Try it: http://demo.perceptron.inc

Interested in the weights? We'll be opening a small partners program for folks to get direct access to the model. DM me.

We're throwing a release party in Bellevue, timed to coincide with MLSys: Thursday, May 21, 6 to 9 pm, with food and drinks. RSVP at https://partiful.com/e/bsC2wZxXPhFiDbMynb5l

@ArmenAgha @Scobleizer Congrats, very cool model!

new architecture ideas coming out of ai startups are cool as hell